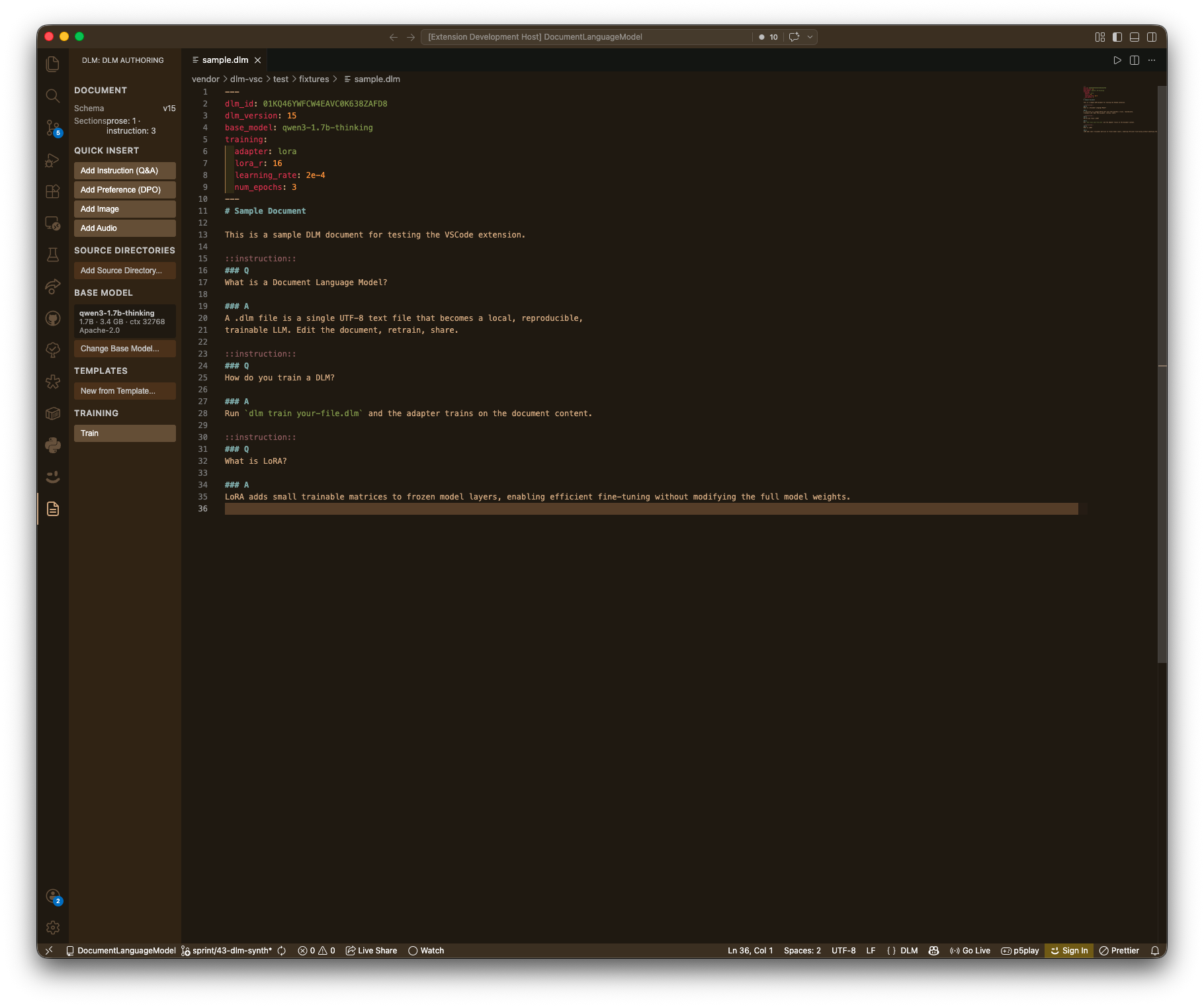

DLM — Document Language Model

Turn a text file into a fine-tuned LLM. Edit the document, retrain, share.

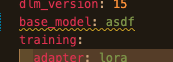

A .dlm file is YAML frontmatter + markdown body with ::instruction:: and ::preference:: sections. Train a LoRA adapter on your document, prompt it, export to Ollama. This extension makes .dlm authoring a first-class editor experience.
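To make that layout concrete, here is a minimal sketch that splits a .dlm document into its frontmatter and its fenced sections. It is not the parser shipped in document-language-model. It assumes two things: the frontmatter is delimited by `---` lines, which is the usual YAML-frontmatter convention, and each fence (::instruction::, ::preference::, ::image::, ::audio::) sits alone on its own line. The helper name `split_dlm` is hypothetical.

```python
import re
from typing import List, Tuple

# Assumption: a fence is a line containing only "::<kind>::".
FENCE = re.compile(r"^::(instruction|preference|image|audio)::\s*$")


def split_dlm(text: str) -> Tuple[str, List[Tuple[str, str]]]:
    """Return (frontmatter, [(kind, body), ...]); text before the first fence is "prose"."""
    lines = text.splitlines()
    frontmatter = ""
    if lines and lines[0].strip() == "---":
        # Locate the closing '---' of the YAML frontmatter block.
        end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
        if end is None:
            raise ValueError("unterminated frontmatter")
        frontmatter = "\n".join(lines[1:end])
        lines = lines[end + 1:]

    sections: List[Tuple[str, str]] = []
    kind, body = "prose", []
    for line in lines:
        match = FENCE.match(line)
        if match:
            # Close the current section before a new fence starts the next one.
            content = "\n".join(body).strip()
            if content or kind != "prose":
                sections.append((kind, content))
            kind, body = match.group(1), []
        else:
            body.append(line)
    content = "\n".join(body).strip()
    if content or kind != "prose":
        sections.append((kind, content))
    return frontmatter, sections


sample = """---
base_model: qwen2.5-0.5b
---
Some background notes.

::instruction::
Q: What is a .dlm file?
A: A trainable document.
"""
print(split_dlm(sample))
```

The Q/A lines in the sample are placeholders. The real header syntax inside instruction blocks is defined by the DLM format itself.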

Features

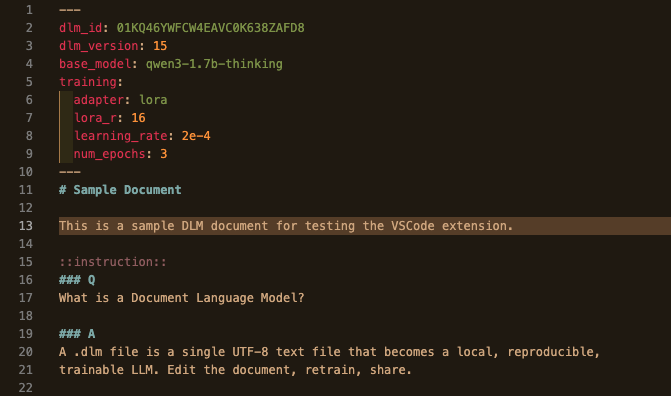

Syntax-aware editing

YAML frontmatter, markdown body, and section fences (::instruction::, ::preference::, ::image::, ::audio::) get distinct, theme-aware highlighting. Q/A headers inside instruction blocks are styled.

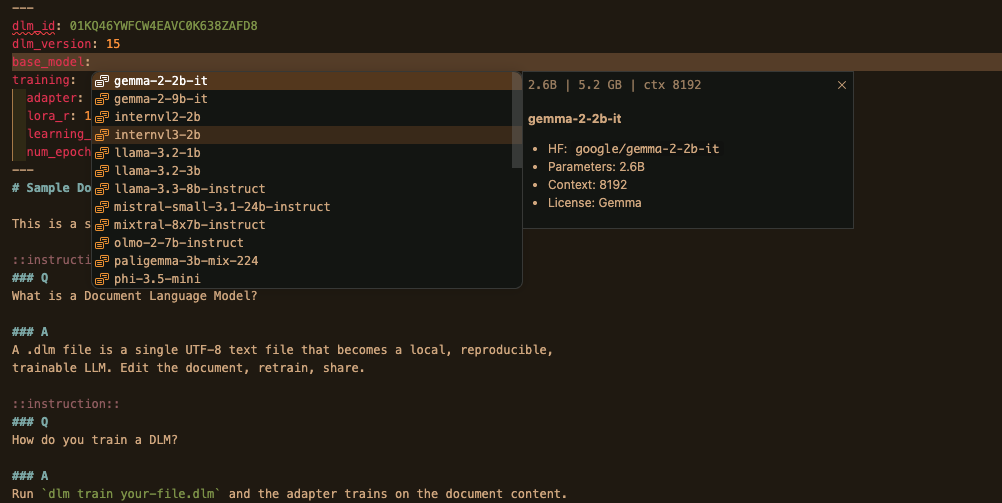

Smart completions

Autocomplete for the 26-entry base-model registry, adapter types, quantization levels, and section fences — all driven by the live schema.

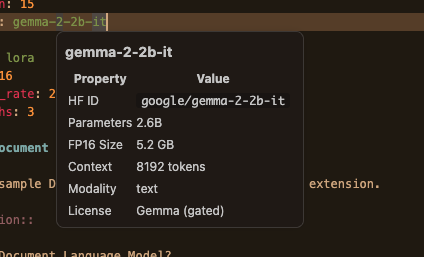

Hover insight

Hover any base-model key to see params, VRAM footprint, context length, modality, and license. Hover a section fence for the section ID and quick stats.

Live diagnostics

Schema errors, unknown base models, and Doctor warnings (VRAM headroom, unsafe combinations) surface inline as you type — no save required.
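As an illustration of the "unknown base model" check, here is a sketch that flags a `base_model` value missing from the registry and suggests the closest key using Python's difflib. This is not how dlm-lsp is documented to do it. The two-entry registry is a hypothetical stand-in for the real 26-entry one, and only `qwen2.5-0.5b` actually appears in this README.

```python
import difflib
from typing import Optional, Set

# Hypothetical subset standing in for the real base-model registry.
REGISTRY: Set[str] = {"qwen2.5-0.5b", "qwen2.5-1.5b"}


def check_base_model(name: str, registry: Set[str] = REGISTRY) -> Optional[str]:
    """Return a diagnostic message for an unknown base_model, or None if it is known."""
    if name in registry:
        return None
    # Offer the single closest registry key, if any is similar enough.
    close = difflib.get_close_matches(name, sorted(registry), n=1)
    hint = f" Did you mean '{close[0]}'?" if close else ""
    return f"Unknown base model '{name}'.{hint}"


print(check_base_model("qwen2.5-0.5"))
```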

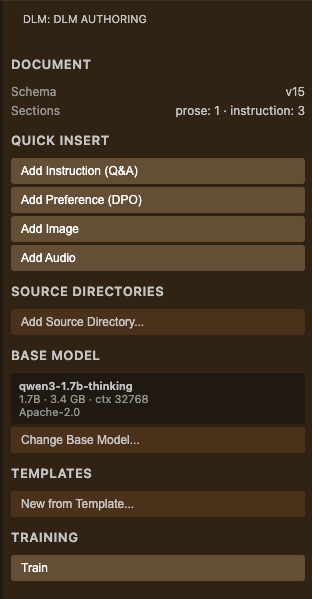

Side panel — frictionless authoring

A dedicated DLM activity-bar view with everything you need to compose a .dlm:

- Quick Insert — Instruction, Preference, Image, and Audio buttons drop snippet templates with tab stops at the cursor

- Source Directory Manager — native folder picker; relative paths computed and inserted into frontmatter via the LSP

- Base Model Browser — searchable QuickPick over the full registry; click to set base_model

- Template Gallery — 8 bundled templates with one-click bootstrap

- Document Overview — section counts, store status, adapter version

- Training Controls — Run / Stop in an integrated terminal
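The Source Directory Manager inserts relative paths rather than absolute ones. Here is a minimal sketch of that computation. It assumes paths are made relative to the folder that contains the .dlm file, which the README does not state explicitly. The helper name `relative_source` is hypothetical.

```python
import os


def relative_source(dlm_file: str, chosen_dir: str) -> str:
    """Path of chosen_dir relative to the directory holding dlm_file."""
    base = os.path.dirname(os.path.abspath(dlm_file))
    rel = os.path.relpath(os.path.abspath(chosen_dir), start=base)
    # Normalise separators so the frontmatter reads the same on every OS.
    return rel.replace(os.sep, "/")


print(relative_source("/home/u/proj/notes.dlm", "/home/u/shared/data"))  # ../shared/data
```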

Transparent store creation

Open a .dlm file. The content-addressed store at ~/.dlm/store/<dlm_id>/ is created on the spot — no manual dlm init needed.
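For orientation, here is a sketch of where that store ends up on disk. It rests on two assumptions. First, the dlm.home setting (see Settings) replaces ~/.dlm as the root. Second, the dlm_id is already known, because the README does not say how the content-addressed ID is derived. The helper name `store_dir` is hypothetical.

```python
from pathlib import Path


def store_dir(dlm_id: str, home_override: str = "") -> Path:
    """Location of a document's store: <root>/store/<dlm_id>.

    Assumption: root is ~/.dlm unless an override (like dlm.home) is set.
    """
    root = Path(home_override).expanduser() if home_override else Path.home() / ".dlm"
    return root / "store" / dlm_id
```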

Requirements

1. Python 3.11+ with the `document-language-model` package: `pip install document-language-model`
2. The `dlm-lsp` language server: `pip install dlm-lsp`
3. (Optional) For training, choose a hardware-appropriate base model. The Doctor panel will tell you what fits.
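To confirm the first two requirements from the interpreter you plan to use, you can run a quick check like the one below. It is a sketch and not a DLM command. It assumes the installed distribution names match the `pip install` commands above, and that the language server is found as `dlm-lsp`, the default of the dlm.lspPath setting.

```python
import importlib.metadata
import shutil
import sys
from typing import Optional


def dist_version(dist: str) -> Optional[str]:
    # Installed version of a pip distribution, or None if it is missing.
    try:
        return importlib.metadata.version(dist)
    except importlib.metadata.PackageNotFoundError:
        return None


def check_requirements(lsp_path: str = "dlm-lsp") -> dict:
    return {
        "python_ok": sys.version_info >= (3, 11),
        "document-language-model": dist_version("document-language-model"),
        "dlm-lsp": dist_version("dlm-lsp"),
        # A bare name is searched on PATH; a path with a directory is checked directly.
        "lsp_found": shutil.which(lsp_path) is not None,
    }


if __name__ == "__main__":
    for key, value in check_requirements().items():
        print(f"{key}: {value}")
```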

Quick start

1. Install the extension.
2. Create a new file with a `.dlm` extension. The side panel becomes available immediately.
3. Click Add Instruction in the side panel and fill in the Q / A.
4. Open the Base Model Browser and pick a small base (e.g. `qwen2.5-0.5b`) for a fast first run.
5. Run `DLM: Train Current Document` from the command palette (⇧⌘P / Ctrl+Shift+P).
6. Run `DLM: Prompt` to chat with your trained adapter.
7. Run `DLM: Export` to package an Ollama-ready `Modelfile` plus GGUF files.

Commands

| Command | What it does |
|---|---|
| `DLM: Train Current Document` | Fine-tune the LoRA adapter on the open `.dlm` |
| `DLM: Export` | Export `base.gguf` + `adapter.gguf` + `Modelfile` |
| `DLM: Synth Instructions` | Generate synthetic Q/A pairs from your sources |
| `DLM: Show Run History` | Browse training runs for the current document |
| `DLM: Open Store Directory` | Reveal `~/.dlm/store/<id>` in the file manager |
| `DLM: Insert Instruction Section` | Drop an instruction block at the cursor |
| `DLM: Insert Preference Section` | Drop a preference block at the cursor |

Settings

| Setting | Default | Description |
|---|---|---|
| `dlm.command` | `uv run dlm` | Command to invoke the dlm CLI (use `dlm` for system venv) |
| `dlm.home` | `""` | Override the `~/.dlm` store root |
| `dlm.defaultBase` | `""` | Default `base_model` for new documents |
| `dlm.watchOnSave` | `false` | Auto-retrain on save |
| `dlm.lspPath` | `dlm-lsp` | Path to the `dlm-lsp` binary |

If dlm is on your PATH (e.g. installed system-wide), set dlm.command to dlm. The default uv run dlm works inside a uv-managed project.
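One way to picture how a dlm.command value becomes a process invocation is to split it into words and append the CLI arguments. The sketch below does this with POSIX-shell-style splitting from Python's shlex. The extension is not documented to work exactly this way, and `--help` is only a placeholder argument. The helper name `build_argv` is hypothetical.

```python
import shlex
from typing import List


def build_argv(command_setting: str, *cli_args: str) -> List[str]:
    """Turn a dlm.command value plus CLI arguments into an argv list."""
    # shlex.split honours quotes, so a path containing spaces can be quoted.
    base = shlex.split(command_setting)
    if not base:
        raise ValueError("dlm.command must not be empty")
    return base + list(cli_args)


print(build_argv("uv run dlm", "--help"))  # ['uv', 'run', 'dlm', '--help']
print(build_argv("dlm", "--help"))         # ['dlm', '--help']
```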

Multi-editor support

The language server is editor-agnostic. Cookbook entries for Zed, Helix, and Neovim live in the [main repository](https://github.com/tenseleyFlow/DocumentLanguageModel/tree/trunk/docs/cookbook).

Links

- [DLM main repository](https://github.com/tenseleyFlow/DocumentLanguageModel)
- [dlm-lsp language server](https://github.com/tenseleyFlow/dlm-lsp)
- [Issues](https://github.com/tenseleyFlow/dlm-vsc/issues)

License

MIT